by Moira Whelan, Jesper Frant / Apr 21, 2026

Moira Whelan and Jesper Frant serve as fellows for Our Secure Future.

This post was originally posted at https://www.techpolicy.press/women-peace-and-security-frameworks-must-apply-to-defense-ai

AI tools are already operational in multiple conflict zones. The headlines are filled with examples, and a recent report by the Brennan Center for Justice at NYU Law details the extent of the deployment of these tools. The US military has used Project Maven to identify targets for strikes in Iraq, Syria, Yemen, and Ukraine. In Gaza, Israeli forces have relied on AI-generated intelligence to inform strikes that killed scores of civilians, and Claude was reportedly used by US forces during a raid on Venezuela and in strikes on Iran.

States participating in these conflicts have adopted Women, Peace and Security (WPS) frameworks that inform how security decisions are made, but there is no indication those commitments have been extended to the AI systems now informing those same decisions. According to our research, commercially available large language models (LLMs)—the same foundation models now being deployed in defense contexts—systematically fail to operationalize WPS standards, let alone others. How, then, can we be assured that AI systems used in conflict are complying with existing obligations?A central recommendation of the Brennan Center report is to strengthen AI testing, expanding operational evaluation and restoring capacity gutted by recent cuts. But the report stops short of defining what exactly should be tested. Its examples of testing failures are exclusively technical. A next step could be to assess whether these systems produce output that complies with the policy frameworks that already govern the conflicts into which they’re deployed.

This assessment is exactly what drove Our Secure Future’s focus on technology through Project Delphi and the Women, Peace and Security and Technology Futures report. Building on this work, our months-long study of AI systems concluded that AI models customized and evaluated with a robust WPS perspective will deliver higher accuracy in high-stakes, real-world conflict and humanitarian scenarios. We found that models informed by WPS data and policy frameworks reduce operational and strategic blind spots, and enable end-users to make faster, better-informed decisions, because they draw upon more comprehensive, community-wide, and policy-informed information.

Furthermore, our research found that when WPS language is omitted from AI prompts—mimicking the sparse format of actual field situation reports and intelligence briefs—model performance on WPS integration drops by nearly 90 percent. When the models fail to consider women in their analysis, it means the actions they recommend do not factor in these populations. That can have real consequences for, in this case, over 50 percent of the global population.

To come to this conclusion, we tested three leading AI models across 13 conflict scenarios at three levels of contextual detail. When prompts explicitly named affected populations (displaced women, female ex-combatants, women-led organizations), average scores were 0.65 out of 1.0. When prompts used the minimal formats practitioners actually use, the same models scored 0.08. A score below 0.2 indicates the model failed to surface any WPS-based analysis. Trust Building—whether the model recommended engaging affected communities—collapsed from 0.71 to 0.22, a 69 percent decline.

This is not a hypothetical gap. It is a measured disconnect between what WPS commitments require and what deployed AI tools actually produce. It is also an indicator of a seriously flawed system in use by militaries today. As these systems become more capable and more integrated into operational decision-making, the gap will only widen unless proactive measures are taken. Our research demonstrates that closing this gap is technically possible.

AI tools in conflict are failing decision-makers

In July 2025, an Institute for Integrated Transitions (IFIT) AI on the Frontline study tested LLMs on conflict resolution scenarios and found structural performance failures across the board—concluding that current AI models are not fit for high-stakes peace and security decision-making without significant intervention. Critically, a follow-up study found that adding a structured prompt—instructing models to follow basic conflict resolution best practices before responding—increased average scores by 65 percent. IFIT recommends embedding such guidance directly into system prompts, an approach consistent with what Anthropic CEO Dario Amodei terms “Constitutional AI”, which leverages a defined set of principles to align model behavior. This is possible, but we have no evidence to suggest that this is taking place.

Our research independently replicates those findings using a distinct scenario set focused on WPS-relevant conflict contexts and a WPS-specific scoring rubric. Across our MVP agent customization experiment and a WPS AI Benchmark that we tested using Weval.org, we found the same structural failures IFIT documented. “Due Diligence”—whether models recommended consulting affected communities and gathering context before responding—remained consistently low for out-of-the-box AI models that are widely available to the public. The convergence between IFIT’s conflict resolution evaluation and our WPS benchmark establishes these as characteristics of current LLMs in conflict contexts, not artifacts of any single methodology. The problem for decision-makers is plain to see. They are increasingly being directed to use tools that simply do not adhere to existing policies, but with existing processes such as benchmarking and developing agents, we could see better informed decisions in conflict and peace building scenarios.

The WPS competence gap

Our research extends those findings by applying a WPS lens: identifying a specific, quantifiable compliance gap and a documented path to closing it.

We call this the WPS competence gap—the measurable performance drop AI models show when WPS language is absent from operational prompts. No model in our evaluation surfaced WPS considerations unless prompted with explicit contextual cues. This matters because field situation reports, intelligence summaries, and policy briefs rarely contain that framing. This is compounded by the fact that AI tools are designed to produce mid-grade answers. For example, if you ask an AI tool to write a book report, it is likely to give you a “C” grade product, not an “A+”. It is even less likely to give you an analysis of how the dynamics of female characters in the book influence the plot…unless it is directed to do so. In conflict, this means decision makers are getting predictable answers, not doctrinal creativity. It is a behavioral default that has not been reconfigured to meet peace and security standards and models do not apply a WPS lens because nothing requires them to do so.

The compliance framework to close this gap already exists. WPS frameworks—grounded in UN Security Council Resolution 1325 and implemented through National Action Plans (NAPs) in over 100 countries—establish commitments for how conflict and security operations should account for women, protect civilian populations, and include affected communities in decision-making. NATO has integrated WPS into doctrine. The US, UK, and most major allied defense establishments have signed NAPs that apply to their operations. Yet we have seen no indication that procurement and deployment of AI tools integrate this doctrine into technical requirements. The tools decision-makers rely on are not built on the same standards on which they have been professionally trained.

Closing the gap is a configuration problem, not a capability problem

Organizations evaluating AI vendors for conflict-relevant applications should not just be asking whether a model is generally capable. They should be asking whether it has been configured and validated against their own policies and standards—and demanding evidence.

Our experiment tested four configurations of the same model against a common prompt, evaluated by AI judges and confirmed by a WPS expert review. The results show a clear customization ladder:

| Configuration | Performance |

|---|---|

| Off-the-shelf — standard chatbot, no customization | C– / D — generic output, omits WPS |

| + WPS instructions — detailed system prompt with principles on how to apply a WPS lens; no added knowledge | B — mentions women, thin on evidence and policy depth |

| + Retrieval augmentation with evidence base — connected to curated WPS research and field case studies | B+ — substantive analysis grounded in real evidence |

| + Retrieval augmentation with National Action Plans — connected to country-specific policy commitments | A — policy-aligned, WPS KPIs |

Our WPS AI Benchmark takes this analysis one step further, making it an effective mechanism to operationalize WPS compliance as a procurement requirement. Models are scored against a standardized WPS scenario set using a structured rubric, yielding measurable, nuanced, and operational evidence that can be used to improve model compliance. Defense organizations and other entities with WPS obligations should be writing this benchmark into their contractual requirements to specify minimum performance thresholds rather than accepting generic vendor claims of “ethical AI” alignment.

The recent standoff between Anthropic and the Pentagon over autonomous weapons and mass domestic surveillance illustrates a broader structural problem: when AI vendors and defense organizations negotiate deployment boundaries, those conversations tend to play out in terms of broad use policies and ethical principles—not measurable, domain-specific performance standards. Without a shared benchmark, procuring organizations have no way to specify what compliant output actually looks like, and vendors have no way to demonstrate it. A WPS benchmark changes that equation. The burden can then shift to the vendor to prove the models they provide actually meet the operational requirements their customers have already committed to.

The default is already a choice

One useful framing likens AI governance to brakes on a fast-moving car—necessary, but always reactive, always trailing the technology. But in WPS-governed contexts, the problem isn’t speed. It’s that the car was never engineered for the road it’s on. Brakes slow it down, but they don’t prevent it from producing structurally flawed output. When an AI system defaults to analysis that omits women, girls and boys in a conflict environment, adding oversight after the fact doesn’t fix the system—it just adds a review layer on top of output that was wrong from the start.

The phrase “oversight hasn’t caught up” frames the gap as a timing problem—as if the standards don’t yet exist and organizations just need more time to develop them. But the standards do exist. The WPS competence gap is not a governance failure that better brakes can catch. It is a design failure: the organizations that wrote the procurement specs, chose the vendors, and decided what standards to require did not require WPS compliance. It is an omission with measurable consequences for operational effectiveness and the protection of civilian populations.

How do we fix it?

Our Secure Futures research is ongoing. The WPS AI Benchmark is an open evaluation framework—the scenario set, evaluation criteria, and methodology are publicly available.

A first step would be to use this model to expand into other areas that govern conflict such as broader human security and to require through laws and policies that procurements adhere to existing standards with evidence produced to confirm this.

Second, advisors within organizations need to become experts. Sadly in the case of our work, it is increasingly clear that commanders are relying more on AI tools than the WPS advisors that exist in the command structure. This is something decision-makers can fix. Training, empowering, and resourcing WPS Advisors to concentrate their energy on influencing the AI tools would not only produce better decision-making almost immediately but would serve as an organizational model for other areas such as human security, humanitarian response and localization.

Third, we know commanders rely on AI tools for speed, but experiments such as this one took hours, not months. Empowering academic partners and outside groups to test assumptions—just as is done in doctrine development—is critical to the process.

We have an important role to play by building benchmarks to evaluate the operational readiness and effectiveness of LLMs. Jack Clark, co-founder of Anthropic, recently said: “Give us a goal. The AI industry is excellent at trying to climb to the top of benchmarks. Come up with benchmarks for the public good that you want.” It’s clear that AI has already entered the battlefield, but humans are still in control. The decision about which humans are empowered to influence the direction of AI systems that can determine war and peace needs to be made now.

NOTE: This post references results for the fourth iteration of its benchmark. Those results can be accessed at weval.org. The WPS AI Agent is available for demonstration at https://wps-agent.streamlit.app.

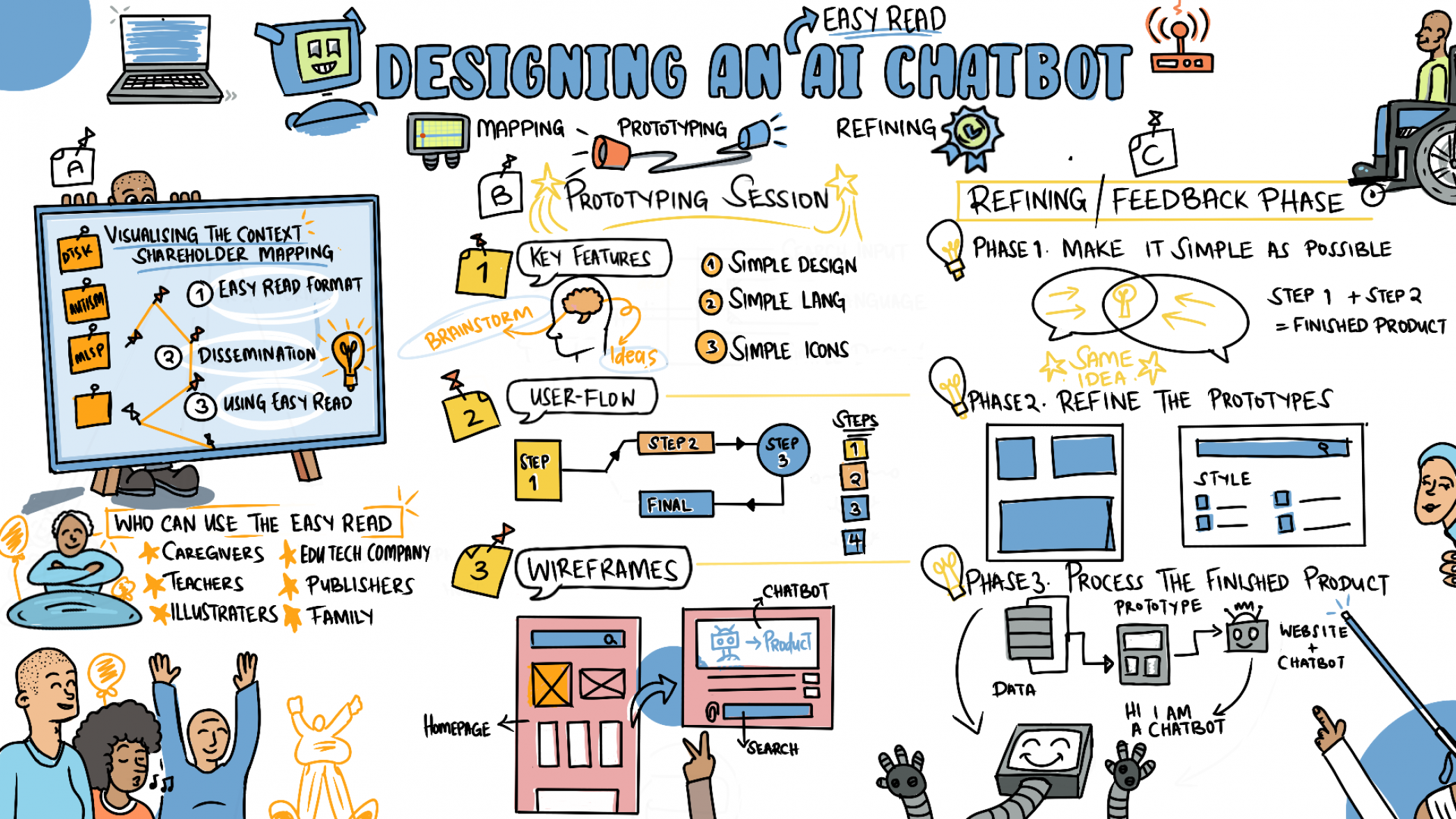

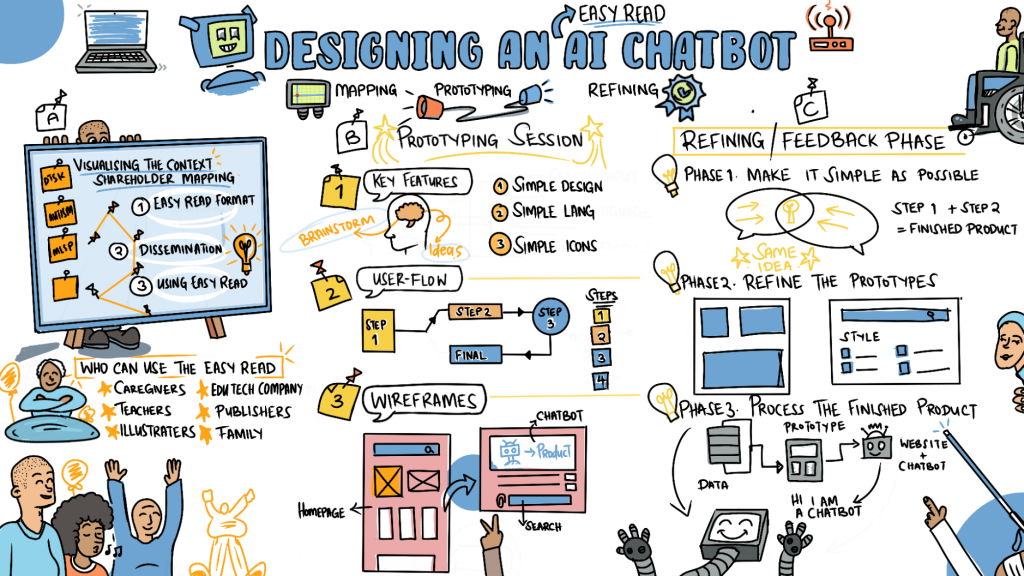

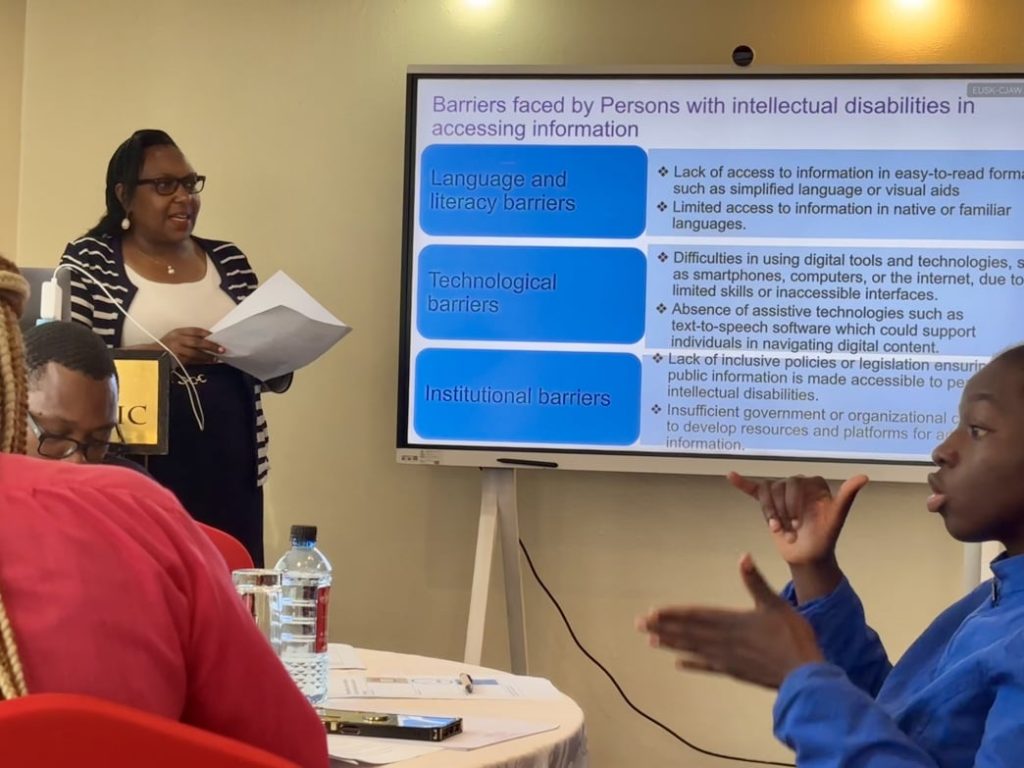

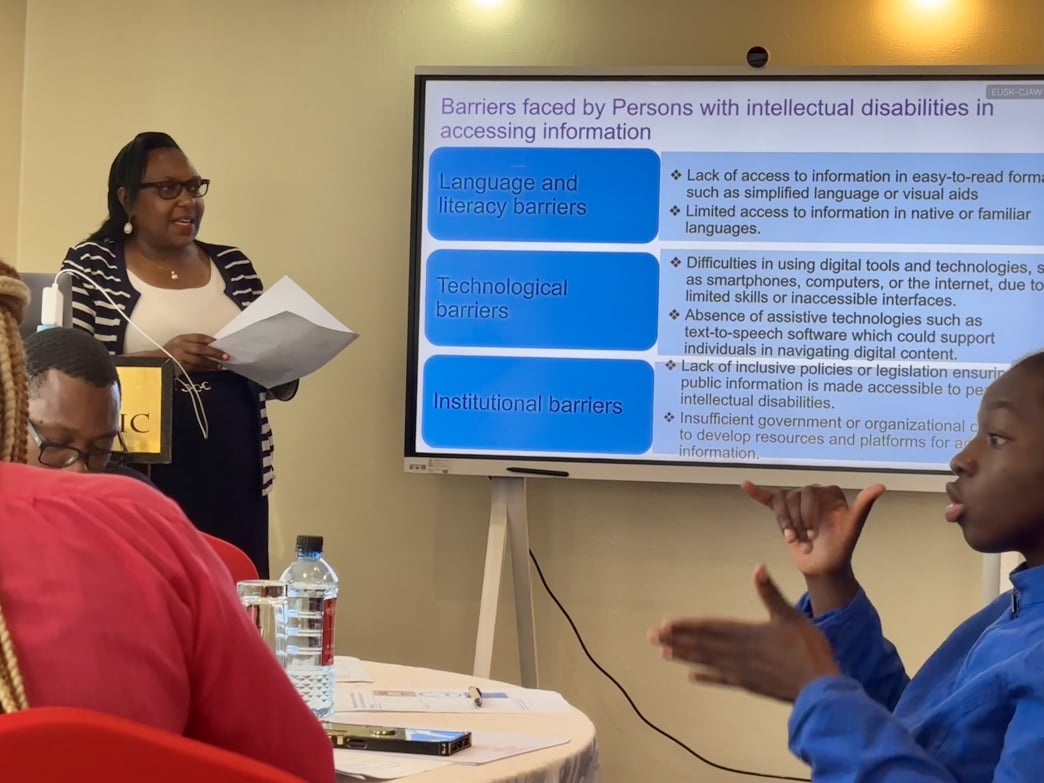

Twenty representatives from various disabled people’s organizations (DPOs) and other civic groups contributed their diverse perspectives and expertise to advance information accessibility in Kenya. These groups included the United Disabled Persons of Kenya (UDPK), the Kenya Association of the Intellectually Handicapped (KAIH), Kenya ICT Action Network (KICTANet), Differently Talented Society of Kenya (DTSK), Black Albinism (BI), Ubongo Kids, Down Syndrome Society of Kenya (DSSK), Kenya Sign Language Interpreters Association (KSLIA), the Kenya National Association of the Deaf (KNAD), and the Directorate of Social Development under the Ministry of Labour and Social Services. The event fostered collaboration and laid the foundation for further development of accessible digital tools in the country.

Twenty representatives from various disabled people’s organizations (DPOs) and other civic groups contributed their diverse perspectives and expertise to advance information accessibility in Kenya. These groups included the United Disabled Persons of Kenya (UDPK), the Kenya Association of the Intellectually Handicapped (KAIH), Kenya ICT Action Network (KICTANet), Differently Talented Society of Kenya (DTSK), Black Albinism (BI), Ubongo Kids, Down Syndrome Society of Kenya (DSSK), Kenya Sign Language Interpreters Association (KSLIA), the Kenya National Association of the Deaf (KNAD), and the Directorate of Social Development under the Ministry of Labour and Social Services. The event fostered collaboration and laid the foundation for further development of accessible digital tools in the country.