Click here to download an Easy Read version of this blog post.

Five years ago, a team of NDI colleagues pitched an idea called “Right To Know” at an internal innovation competition, the culminating project of an internal course on Democracy and Technology (DemTech 1000) I organized. The concept, led by Whitney Pfeifer, was straightforward: build a tool that could translate complex civic documents into Easy Read format—short sentences, plain language, paired with clear illustrations—so that people with intellectual disabilities could access the same information as everyone else. The team won, the idea got a small innovation grant, and what followed was a long, winding road to a working product that I’m only now finally able to share.

The Easy Read Generator is now officially a thing!

What Easy Read Is

Easy Read is a method of presenting information in a format that’s easier to understand. It combines simple language with images that reinforce the meaning of each sentence. It’s valuable for people with intellectual disabilities, low literacy levels, or limited fluency in the language being used—but it’s also just good communication practice more broadly.

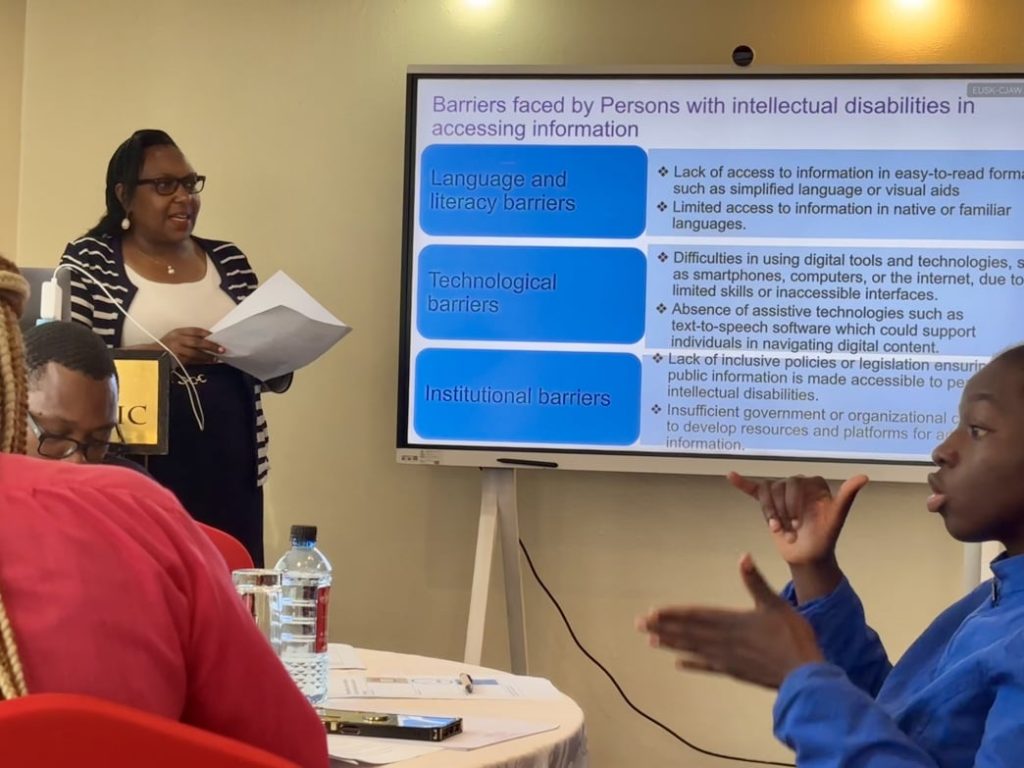

Article 21 of the UN Convention on the Rights of Persons with Disabilities guarantees the right to accessible information. In practice, though, Easy Read materials are expensive and time-consuming to produce, which means they’re rarely created—especially in lower-income countries where the need is greatest and the resources are thinnest.

An Idea Ahead of Its Time

The “Right To Know” pitch happened in 2021—more than a year before ChatGPT launched and kicked off the modern era of generative AI. The team envisioned a tool that could take dense policy language and automatically simplify it, but the technology to do that reliably didn’t exist yet. When ChatGPT arrived in late 2022, the concept Whitney’s team had imagined suddenly became technically plausible. With the innovation grant, we built a first version: a static site at easyread.demcloud.org with detailed instructions on how to use generative AI tools to accelerate Easy Read document creation.

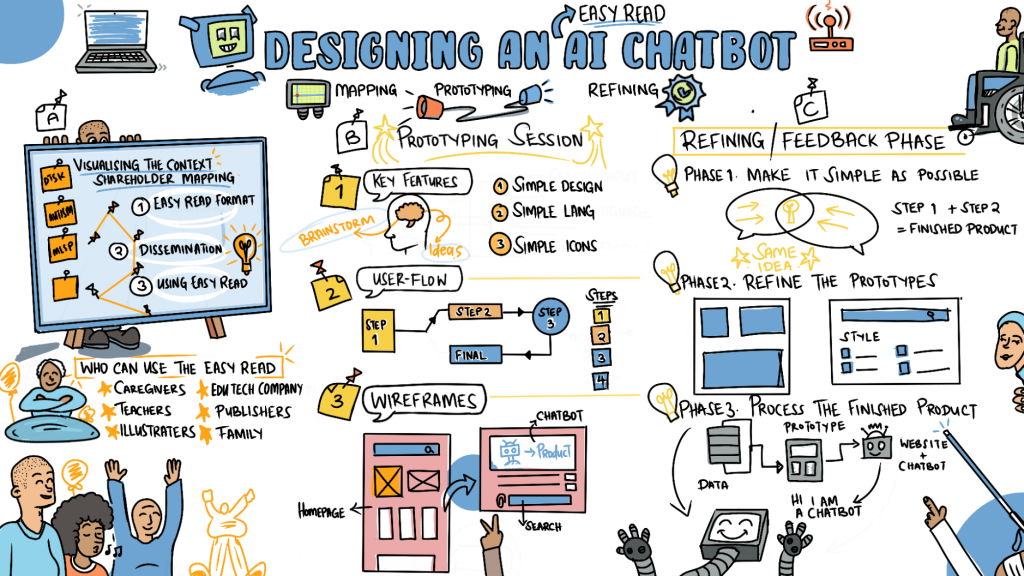

In October 2024, I traveled to Nairobi, Kenya, to facilitate a human-centered design workshop with representatives from disabled people’s organizations (DPOs) including the United Disabled Persons of Kenya, the Kenya Association of the Intellectually Handicapped, the Down Syndrome Society of Kenya, and several others. Over two days, we tested assumptions about accessible information, explored what generative AI could and couldn’t do, and collaboratively designed the features an Easy Read generator tool would need.

One moment from that workshop has stayed with me. A teacher who supports students with Down Syndrome said: “I wish I knew about this before. This will help a lot. I struggle to break down complex jargon into understandable information. With this tool, that work becomes easier.”

Continuing After the Layoff

In January 2025, I was laid off from NDI after nearly 11 years. The Easy Read Generator was not finished. The workshop participants had given us a clear mandate and a thoughtful design, and I had made commitments to them and to the DPOs we were working with. I continued the work on my own, with help from University of Maryland students who contributed concepts for the Easy Read Generator's UX redesign.

The Image Problem

Most people who encounter Easy Read for the first time assume the images are supplementary—nice to have, but not essential. They’re not. In Easy Read, each illustration exists to support the comprehension of a specific sentence. If the image doesn’t clearly represent the concept in the text, it can actually make the document harder to understand, which is the opposite of the goal.

When I first tried to build the generator, I assumed AI image generation would handle this, but current AI image generators are weak at producing the kind of clear, simple illustrations that Easy Read requires. The images they generate tend to be too detailed, too stylistically inconsistent, too prone to visual noise, and often imbued with cultural biases that undermine comprehension. Closing that gap would have meant training a custom image generation model, which was far beyond what I could take on as a solo developer working on a civic tech side project.

That failure stalled the project for months. I tried multiple approaches, multiple tools, multiple prompting strategies. None of them produced images I’d feel comfortable putting in front of the people this tool is meant to serve.

Selecting Instead of Generating

The thing that eventually unblocked the project was a shift in approach. Instead of asking AI to generate images, I started asking it to select them.

I built a keyword-mapped image library—a JSON file containing 564 keywords mapped to 186 unique illustrations drawn from three open-licensed sources:

- Mulberry Symbols: a widely used symbol set designed for augmentative and alternative communication (AAC), licensed under CC BY-SA 2.0 UK

- OpenMoji: an open-source emoji library with clean, consistent line art, licensed under CC BY-SA 4.0

- NDI’s Easy Read Online Dictionary: illustrations collected through NDI’s own Easy Read program, licensed under CC BY-SA 4.0

When a user pastes text into the Easy Read Generator, the LLM does two things: it simplifies the language into short, clear sentences, and it matches each sentence to the most appropriate illustration from the library using the keyword map. The AI isn’t creating images—it’s making selections from a curated set of symbols that were designed for this purpose by people who understood accessible communication.
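To make the idea of a keyword map concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the JSON shape, the file paths, and the `load_keyword_map` and `pick_illustration` helpers are hypothetical and are not taken from the actual tool. In the real generator the LLM does the matching; this sketch swaps in a plain word lookup only to show how many keywords can point to a smaller set of illustrations.

```python
import json
import re
from typing import Dict, Optional

# Hypothetical excerpt of a keyword map: several keywords share one image.
# The real library's schema and file names are not shown in this post.
KEYWORD_MAP_JSON = """
{
  "vote": "mulberry/vote.svg",
  "voting": "mulberry/vote.svg",
  "election": "mulberry/vote.svg",
  "law": "openmoji/scroll.svg",
  "rights": "ndi/rights.png"
}
"""


def load_keyword_map(raw: str) -> Dict[str, str]:
    """Parse the JSON text and lowercase the keywords for matching."""
    return {key.lower(): path for key, path in json.loads(raw).items()}


def pick_illustration(sentence: str, keyword_map: Dict[str, str]) -> Optional[str]:
    """Return the image for the first word that is a known keyword, else None.

    This is a stand-in for the LLM's selection step, not the tool's logic.
    """
    for word in re.findall(r"[a-z']+", sentence.lower()):
        if word in keyword_map:
            return keyword_map[word]
    return None


keyword_map = load_keyword_map(KEYWORD_MAP_JSON)

for sentence in ["You have the right to vote.", "The weather is nice."]:
    print(sentence, "->", pick_illustration(sentence, keyword_map))
```

A sentence with no known keyword returns `None` here, which mirrors the constraint of selecting from a fixed library: the output can only contain an image that already exists in the curated set.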

The library doesn’t cover every possible concept, and some matches are better than others. But every image in the output was created by designers who understand accessibility, not hallucinated by a model optimizing for visual plausibility.

Where This Leaves Me

The tool I shipped is not what I originally envisioned. It’s simpler, more constrained, and more honest about what current AI tools can and can’t do. I think it’s better for it. My earlier attempts were too ambitious, and the image generation requirement exceeded what the technology could responsibly deliver. Stripping back to the core problem—simplify text, match it to existing illustrations—turned out to be enough.

After contributions from countless people, I’m relieved that I was finally able to deliver a working prototype. The Easy Read Generator will remain free to use, no login required, as long as I’m able to host and improve it. If this tool is useful to you or your organization, consider supporting the project.